Accessing data on the compute servers

Where are the data located?

Large data sets are located in the

/data/

directory. Typically, you should not depend on this directly, but use an environment variable DATADIR that is set to this directory and your code should use this environment variable to find the location of the top data directory. The same holds for the individual large data sets described below. All of these data might get moved around in the future, so by relying on environment variables your code will be resilient against such changes.

APOGEE data

The APOGEE data are located at $DATADIR/sdss/apogee following the layout described here. To use this data, you should set an environment variable SDSS_LOCAL_SAS_MIRROR to point to $DATADIR/sdss/apogee. This wil allow use of the apogee package, but will also be more generally useful.

We can download the APOGEE data with commands like

rsync -avz --include='*/' --include='*/*/' --include='apStar-*.fits' --include='asStar-*.fits' --exclude='*' rsync://SDSS_DATA_USERNAME@dtn01.sdss.org/sas/SDSS_DATA_RELEASE/apogee/spectro/redux/SDSS_DATA_REDUX/stars/ .

for apStar/asStar files in the stars/ directory and

rsync -avz --include='*/' --include='*/*/' --include='aspcapStar-*.fits' --include='allStar*.fits' --include='allVisit*.fits' --exclude='*' rsync://SDSS_DATA_USERNAME@dtn01.sdss.org/sas/SDSS_DATA_RELEASE/apogee/spectro/aspcap/SDSS_DATA_REDUX/SDSS_ASPCAP_REDUX/ .

for aspcapStar files and the allStar and allVisit files. In these commands, SDSS_DATA_USERNAME is the general SDSS username for accessing data and SDSS_DATA_RELEASE, SDSS_DATA_REDUX, and SDSS_ASPCAP_REDUX are the reduction identifiers (e.g., r12 and l33 for DR16). [Note that these commands have not been tested (much)].

Gaia data

The Gaia data are located at $DATADIR/Gaia following the layout of the Gaia data archive. To use this data as part of the gaia_tools package, you should set an environment variable GAIA_TOOLS_DATA to point to $DATADIR/data4code/gaia_tools.

The DR1 Gaia data were downloaded with the following commands (note that the DR1 data were later moved to a sub-directory gdr1):

curl -O http://cdn.gea.esac.esa.int/Gaia/tgas_source/fits/TgasSource_000-000-0[00-15].fits curl -O http://cdn.gea.esac.esa.int/Gaia/gaia_source/fits/GaiaSource_000-0[00-19]-[000-255].fits curl -O http://cdn.gea.esac.esa.int/Gaia/gaia_source/fits/GaiaSource_000-020-[000-110].fits

The DR2 Gaia data were downloaded using

wget -r -nH -nc --cut-dirs=2 --no-parent --reject="index.html*",vot.gz http://cdn.gea.esac.esa.int/Gaia/gdr2/

although rejecting the index.html files seems not to have worked! The DR2 data are also loaded into the catalogs database as described below. We also downloaded the Gaia DR2 2MASS match using queries like

SELECT tmass_match.*, tmass.designation, tmass.ra, tmass.dec, tmass.j_m, tmass.j_msigcom, tmass.h_m, tmass.h_msigcom, tmass.ks_m, tmass.ks_msigcom, tmass.ext_key, tmass.ph_qual FROM gaiadr2.tmass_best_neighbour AS tmass_match INNER JOIN gaiadr1.tmass_original_valid AS tmass ON tmass.tmass_oid = tmass_match.tmass_oid WHERE tmass_match.source_id BETWEEN MIN_SOURCE_ID AND MAX_SOURCE_ID;

in chunks of 17 gaia_source CSV files (with source_id between

MIN_SOURCE_ID and MAX_SOURCE_ID).

2MASS, Gaia DR2, and other catalogs

2MASS data are located at $DATADIR/2mass and have been directly downloaded from the 2MASS archive. The data have been loaded into a PostgreSQL database catalogs that is running on the server (as is usual, this database is owned by the user postgres). The point-sources table is twomass_psc; the schema for this table is here. You can use it for example as

> psql catalogs -U postgres

catalogs=# select count(*) as rows FROM twomass_psc;

rows

-————

470992970

(1 row)

In Python, you can access this database using, e.g., the

psycopg2 module>>> import psycopg2

>>> conn= psycopg2.connect(“dbname=catalogs user=postgres”)

>>> cur= conn.cursor()

>>> cur.execute(“select count(*) as rows FROM twomass_psc;”)

>>> print(cur.fetchall())

[(470992970L,)]

>>> cur.close()

>>> conn.close()

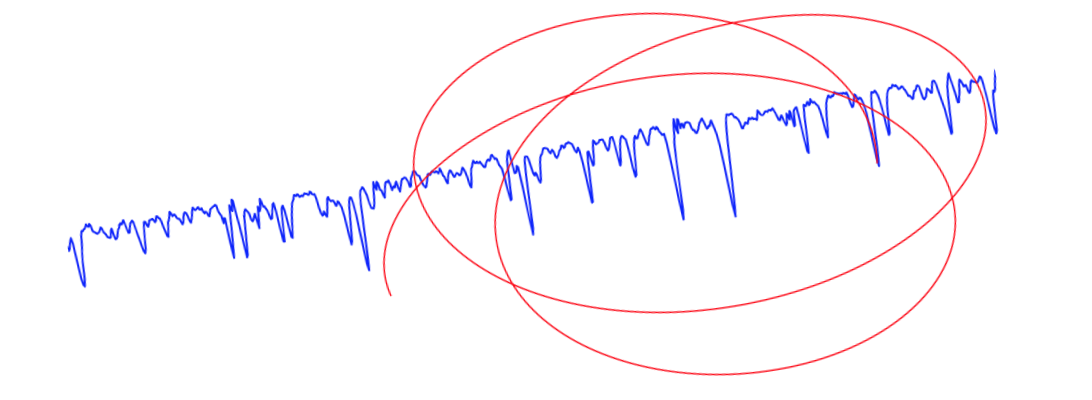

A slightly more complicated computes the number counts in 0.1 mag bins in J:

>>> cur.execute(“select floor(j_m*10), count(*) as count from twomass_psc group by floor(j_m*10);”)

>>> a= numpy.array(cur.fetchall(),dtype=‘float’).T

>>> sindx= numpy.argsort(a[0])

>>> semilogy(a[0,sindx]/10.,a[1,sindx])

Note that these queries each take about five minutes to execute. The 2MASS point-source catalog comes with a table

twomass_psc_hp12 that has the HEALPix index at level 12 in nested format for (RA,Dec). The HEALPix index is contained in hp12index and can be joined with twomass_psc on pts_key. For example, to get all stars in pixel 100 at level 10, you can run in Python

cur.execute("select ra,decl from twomass_psc, twomass_psc_hp12 where twomass_psc.pts_key = twomass_psc_hp12.pts_key and floor(hp12index/16) = 100;")

making use of the fact that pixel numbers at lower levels are simple divisions by a factor of four for each lower level.

The Gaia DR2 data have similarly been loaded into the catalogs database as table gaiadr2_gaia_source. The load command was

> psql -f gaia_source_schema catalogs

> find gaia_source/csv/ -name GaiaSource_*.csv.gz | xargs zcat | sed -e ‘/^solution_id/d’ | psql -c “copy gaiadr2_gaia_source from stdin with delimiter ‘,’ NULL as ’’” catalogs

using the schema in the $DATADIR/Gaia/gdr2 directory. The database was then configured for rapid access to random subsets of the data by doing

> psql catalogs -U postgres

catalogs=# create index random_index_idx on gaiadr2_gaia_source (random_index);

catalogs=# set maintenance_work_mem= ‘32GB’;

catalogs=# cluster verbose gaiadr2_gaia_source using random_index_idx;

The gaia_source_with_rv data was loaded into a similar table gaiadr2_gaia_source_with_rv.

The Gaia DR2 2MASS match was downloaded using the script available here and then loaded with the schema available here using

> psql -f tmass_schema catalogs -U postgres

> find tmass_best_neighbour/csv/ -name tmass_best_neighbour_*.csv.gz | xargs zcat | sed -e ‘/^source_id/d’ | psql -c “SET TRANSACTION READ WRITE; copy gaiadr2_tmass_best_neighbour from stdin with delimiter ‘,’ NULL as ’’” catalogs -U postgres

Similarly, the Gaia DR2 PanSTARRS1 match was downloaded using the script available here and then loaded with the schema available here using

> psql -f panstarrs1_schema catalogs -U postgres

> find panstarrs1_best_neighbour/csv/ -name panstarrs1_best_neighbour_*.csv.gz | xargs zcat | sed -e ‘/^source_id/d’ | psql -c “SET TRANSACTION READ WRITE; copy gaiadr2_panstarrs1_best_neighbour from stdin with delimiter ‘,’ NULL as ’’” catalogs -U postgres

Finally, the catalogs database was made read-only by default using

> psql catalogs -U postgres

catalogs=# set default_transaction_read_only= true;

but note that this can be turned off by simply running the last command with false instead. The Gaia EDR3 data in the database were loaded with similar commands and schemas as for the DR2 data as described above, except that when loading this data, the read-only default gave issues and it was necessary to alter the postgres user’s default permissions as

>psql catalogs -U postgres

catalogs=# alter user postgres set default_transaction_read_only= false;

and then to undo this after. This makes sense, since the default_transaction_read_only parameter is a session parameter, but it doesn’t make sense why this wasn’t necessary before…

To (re-)start the database, log in as the postgres user

su postgres

and start the database as

cd ~postgres && postgres -D /usr/local/pgsql/data >logfile 2>&1 &

Downloading data

If you require a large data set, it should be downloaded and kept under the DATADIR directory. Only users that are part of the datagrp group are allowed to write to DATADIR. Users are added to this group using

usermod -g datagrp USER